How Medical Office Force Safeguards Your Practice’s AI From Knowledge Drift

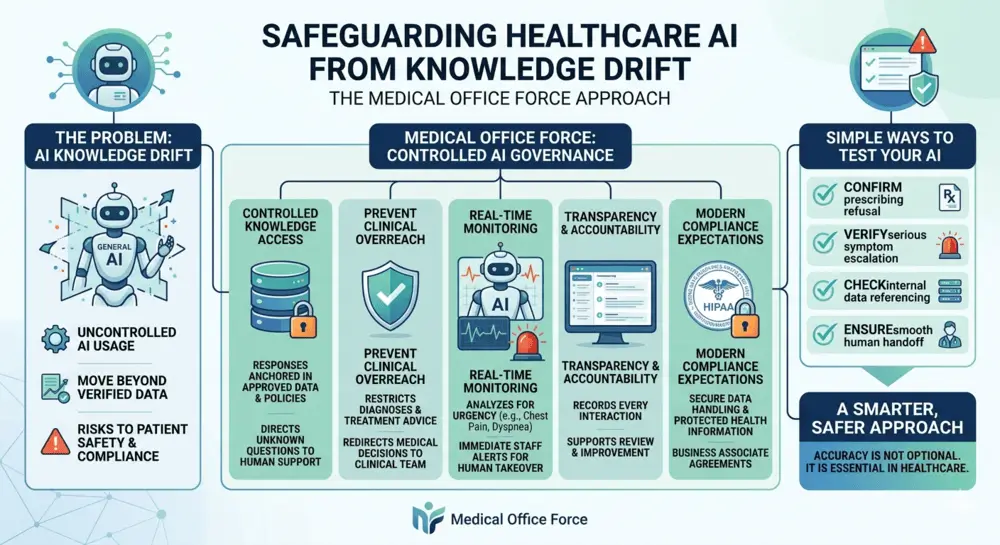

AI knowledge drift in healthcare is when an artificial intelligence system generates responses outside its verified data sources, increasing the risk of inaccurate information, compliance issues, and patient safety concerns.

Summary

* AI knowledge drift can lead to unsafe or inconsistent patient communication

* Healthcare AI must operate within verified, practice-approved data

* Systems should refuse unknown queries and escalate when needed

* Medical Office Force prevents drift through strict controls and real-time monitoring

Healthcare is entering a new era where artificial intelligence is becoming the first point of contact for patients. It is answering calls, booking appointments, and supporting front desk operations around the clock.

But as AI becomes more capable, one serious concern is emerging. It is called knowledge drift.

Knowledge drift happens when an AI system begins to move beyond its verified data and starts generating responses based on general patterns instead of trusted information. In healthcare, this is not just a technical issue. It is a risk to patient safety, compliance, and trust.

Medical Office Force was built to eliminate this risk completely.

Clinical Governance and Oversight

Medical Office Force systems are developed and monitored under structured governance processes that prioritize patient safety, compliance, and operational accuracy.

This includes defined boundaries for AI behavior, ongoing system evaluation, and alignment with healthcare communication standards.

Why AI Needs Strict Boundaries in Healthcare

Most AI tools available today are designed for general use. They are trained on large datasets and generate responses based on probability. This works well for casual conversations, but it becomes dangerous in a clinical setting.

If an AI system provides incorrect billing details or responds with inaccurate medical information, the consequences can be serious. Patients may be misinformed, compliance may be compromised, and the reputation of the practice may suffer.

This is why healthcare AI must operate within strict, clearly defined limits.

What Is AI Knowledge Drift in Healthcare?

Knowledge drift happens when an AI system begins to move beyond its verified or approved data sources and starts generating responses based on general patterns instead of trusted information. This can lead to inconsistencies in patient communication and increased operational risk.

The End of Uncontrolled AI in Medical Practices

In recent years, many practices have experimented with different AI tools without proper governance. This has led to what experts describe as unregulated or uncontrolled AI usage.

As AI evolves into systems that can take actions instead of just answering questions, the need for control becomes even more critical.

Medical Office Force addresses this challenge by using a structured system that ensures the AI never operates outside your practice’s approved knowledge base.

Controlled Knowledge Access Through Verified Data

Medical Office Force ensures that every response generated by the AI comes directly from your practice’s internal policies and verified information.

If a patient asks a question that is not included in your system, the AI does not attempt to guess. Instead, it responds honestly and directs the patient to the appropriate human support.

This approach removes uncertainty and ensures consistency in every interaction.

Built In Limits That Prevent Clinical Overreach

One of the most important safeguards in healthcare AI is preventing the system from acting like a clinician.

Medical Office Force ensures that the AI cannot provide diagnoses, treatment advice, or clinical recommendations. These actions are intentionally restricted within the system.

If a patient asks for medical advice, the AI immediately redirects the conversation to your clinical team. This ensures that all medical decisions remain in the hands of qualified professionals.

Real Time Monitoring for Urgent Situations

Not every patient call is routine. Some situations require immediate attention.

Medical Office Force continuously analyzes conversations for urgency. If a patient mentions symptoms such as chest pain or difficulty breathing, the system immediately flags the interaction and alerts your staff.

Instead of continuing the automated conversation, the system ensures that a human takes over without delay.

In real-world usage, patient communication systems must handle a wide range of unpredictable scenarios, making controlled responses and escalation protocols essential for maintaining consistency and safety.

This layer of monitoring adds an essential level of safety to patient communication.

Controlled AI vs General AI Systems

Controlled AI uses verified, practice-specific data

General AI relies on broad, probabilistic information

Controlled AI refuses unknown queries

General AI may attempt to generate responses anyway

Simple Ways to Test Your AI System

Even the most advanced systems should be tested regularly to ensure they are working correctly.

Here are a few simple checks you can perform:

* Ask if the system can prescribe medication and confirm that it refuses

* Mention a serious symptom and verify that it triggers escalation

* Ask where the information is coming from and ensure it references your internal data

* Request to speak to a human and confirm a smooth handoff

These checks help ensure that your AI remains aligned with your standards.

Meeting Modern Compliance Expectations

Healthcare compliance continues to evolve, and AI systems must keep up.

A safe and compliant AI system must include secure data handling, clear agreements for data protection, and controlled access to sensitive information.

Medical Office Force is designed to meet these expectations by ensuring that every interaction is secure, traceable, and aligned with current healthcare regulations.

Transparency and Accountability in Every Interaction

One of the biggest advantages of a controlled AI system is transparency.

Medical Office Force records every interaction in a way that allows your team to review what happened, understand decisions, and make improvements when needed.

This level of visibility ensures accountability and supports better decision making.

At the same time, it reinforces the role of the physician as the final authority in all clinical matters.

A Smarter and Safer Approach to AI in Healthcare

Artificial intelligence has the potential to transform healthcare operations, but only if it is implemented responsibly.

Medical Office Force focuses on safety, control, and clarity. It ensures that your AI system works within defined boundaries, supports your team, and protects your patients.

Because in healthcare, accuracy is not optional. It is essential.

Frequently Asked Questions

- What is AI knowledge drift in healthcare?

It is when an AI system generates responses outside its verified or approved data sources, leading to potential inaccuracies in patient communication and operational risks. - Is AI safe for patient communication?

Yes, if it operates within strict boundaries, uses verified data, and escalates when necessary. - Can AI replace medical staff?

No. AI supports administrative workflows but does not replace clinical decision-making. - How can I ensure my AI system is compliant?

Use systems designed specifically for healthcare, with controlled data access, clear limitations, and full transparency.

- What is AI knowledge drift in healthcare?

Important Note

Medical Office Force does not provide medical advice. All clinical decisions are handled by licensed healthcare professionals.

This content is for informational purposes only and does not constitute medical or legal advice. All clinical decisions should be made by licensed healthcare professionals.

References

1. Vouched Model Context Protocol in Healthcare

Link: https://www.vouched.id/learn/blog/the-model-context-protocol-unlocking-trust-and-efficiency-for-ai-in-healthcare

Section: Overview

Quoted part: “Model Context Protocol enables AI systems to retrieve trusted and context specific data”

2. Wolters Kluwer MCP Healthcare Insights

Link: https://www.wolterskluwer.com/en/expert-insights/exploring-mcp-how-model-context-protocol-supports-the-future-of-agentic-healthcare

Section: Key Insights

Quoted part: “MCP allows healthcare AI systems to operate with verified structured data sources”

3. Prosper AI HIPAA Compliance Guide 2026

Link: https://www.getprosper.ai/blog/hipaa-compliant-ai-patient-communication-system-guide

Section: Compliance Requirements

Quoted part: “A Business Associate Agreement is required when handling protected health information”

4. Cyber Defense Magazine AI and PHI Protection

Link: https://www.cyberdefensemagazine.com/ai-agents-and-safeguarding-protected-health-information/

Section: Data Security

Quoted part: “Healthcare AI systems must implement strict controls to protect protected health information”

5. Holt Law AI Governance in Healthcare 2026

Link: https://djholtlaw.com/national-legal-regulatory-analysis-2026-artificial-intelligence-privacy-and-clinical-governance-for-medical-practices/

Section: Legal Responsibility

Quoted part: “Healthcare providers remain responsible for outcomes involving AI supported decisions”

To see how this works in practice, explore our AI voice agent for medical practices.

For more information, write to contact@medicalofficeforce.com

Lets learn to watch AI agents coming soon in our life. Great article.